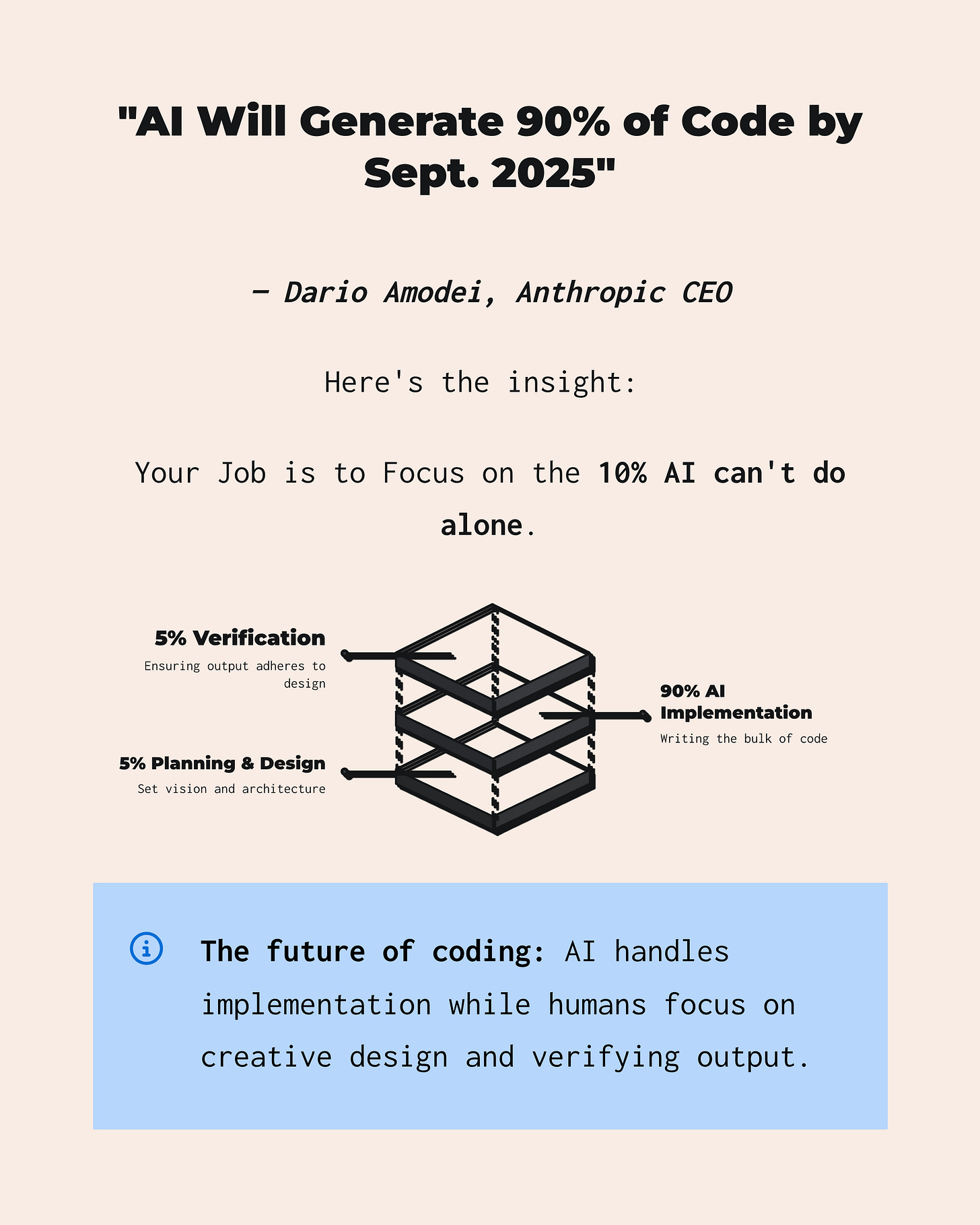

AI Can Write 90% of Code Today. Your Job is to Put 100% into the Remaining 10%.

Some tips for how you can do that.

We are at the point, today, where AI can write 90% of code. In my personal experience, AI is generating more than 90% of my code. I can’t remember the last time I wrote out code by hand, unassisted by a prompt to a coding agent.

Where does that leave developers? It leaves you with the opportunity to focus 100% on the remaining 10% that AI cannot do.

That is: Planning & Design and Verification.

1. Planning & Design

I recently started a major rewrite of my system “Meta Agent”. It’s been about six months since I released v1. Much has changed in those six months, so I wanted to do a rewrite and see how much more effective the SOTA coding agents are today (of course I’m using Amp).

What I did was open up ChatGPT and start talking with GPT-5 Pro. I explained that I was working on a rewrite of Meta Agent, and that I wanted to have a discussion with it in order to develop planning documentation to feed to my coding agent.

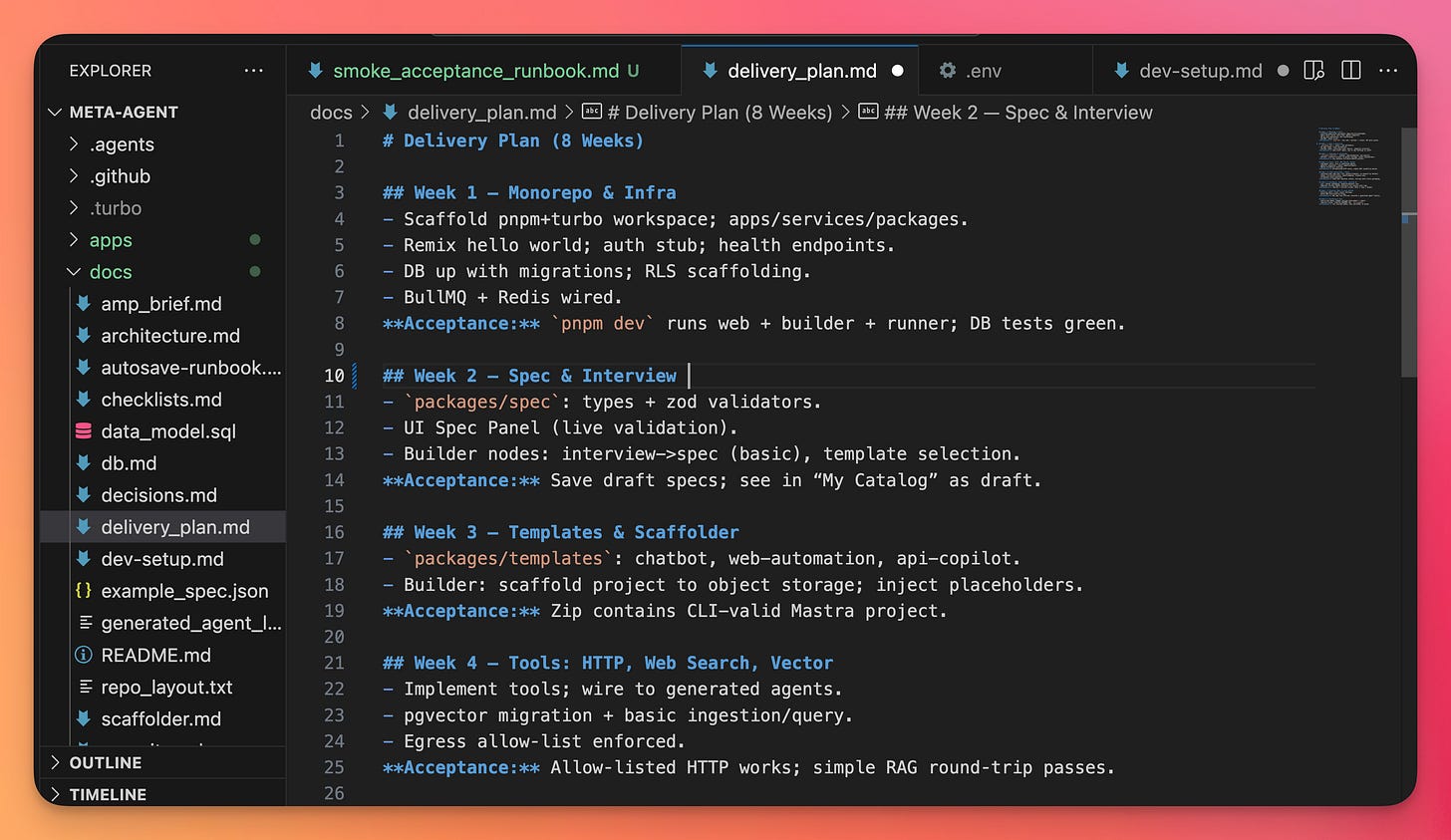

The conversation wasn't that long, and doing this is really helpful because in the end you get useful planning documentation, like in this screenshot here:

One of the keys is to get a delivery plan. With a delivery plan, you can then tell your agent to go step-by-step by using each point in the delivery plan to create a more detailed plan for how to implement that step.

It works really well for keeping your agent on track. You can get more complex with this, and that could work well too. But I found that you can keep it pretty simple and it’s still effective.

The documents produced by the planning & design phase, talking to GPT-5 Pro, also include things like:

An architecture diagram

Product decisions

Checklists to check before pushing changes

Database design

An example data model

There's a lot here for your agent to work with.

Once I have all of the planning & design documentation loaded into my local repo, I start working with Amp.

I say, "Hey, look at the first bullet point of Week 1 and create a step-by-step detailed implementation plan for that bullet point." I review the plan, and usually it's spot on. If it is, I just say "Proceed with the implementation."
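The step-by-step loop described here can be sketched in a few lines of Python. This is only an illustration, assuming the delivery plan is a markdown file with `## Week N` headings and `- ` bullets. The plan format, the sample plan text, and the `parse_delivery_plan` and `build_prompts` helpers are all hypothetical and not taken from the Meta Agent repo. The sketch just turns each bullet into the kind of prompt quoted above.

```python
import re

# Hypothetical delivery plan; the real one comes out of the planning conversation.
SAMPLE_PLAN = """\
## Week 1
- Set up the project skeleton
- Define the data model

## Week 2
- Build the API layer
"""


def parse_delivery_plan(text):
    """Return a list of (week, bullet) pairs in document order."""
    items = []
    week = None
    for line in text.splitlines():
        heading = re.match(r"^##\s+(.*\S)\s*$", line)
        bullet = re.match(r"^\s*[-*]\s+(.*\S)\s*$", line)
        if heading:
            week = heading.group(1)
        elif bullet and week is not None:
            items.append((week, bullet.group(1)))
    return items


def build_prompts(text):
    """Build one planning prompt per delivery-plan bullet."""
    prompts = []
    for week, bullet in parse_delivery_plan(text):
        prompts.append(
            f'Look at this item from {week} of the delivery plan: "{bullet}". '
            "Create a step-by-step detailed implementation plan for it, "
            "then wait for my review before implementing."
        )
    return prompts


if __name__ == "__main__":
    # Print the prompts so they can be pasted to the agent one at a time.
    for prompt in build_prompts(SAMPLE_PLAN):
        print(prompt)
```

Feeding the prompts one at a time, and reviewing each plan before saying "Proceed with the implementation," keeps a human checkpoint between every step.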

That brings us to the next phase that you want to be involved in…

2. Verification

Today's coding agents, running on SOTA AI models, can work for pretty long durations. A lot can happen between when you tell an agent to go ahead and implement your plan and when it comes back to you with some code, documentation, or decisions that it made.

It is crucial to verify that what the AI generated is actually what you designed and planned on creating.

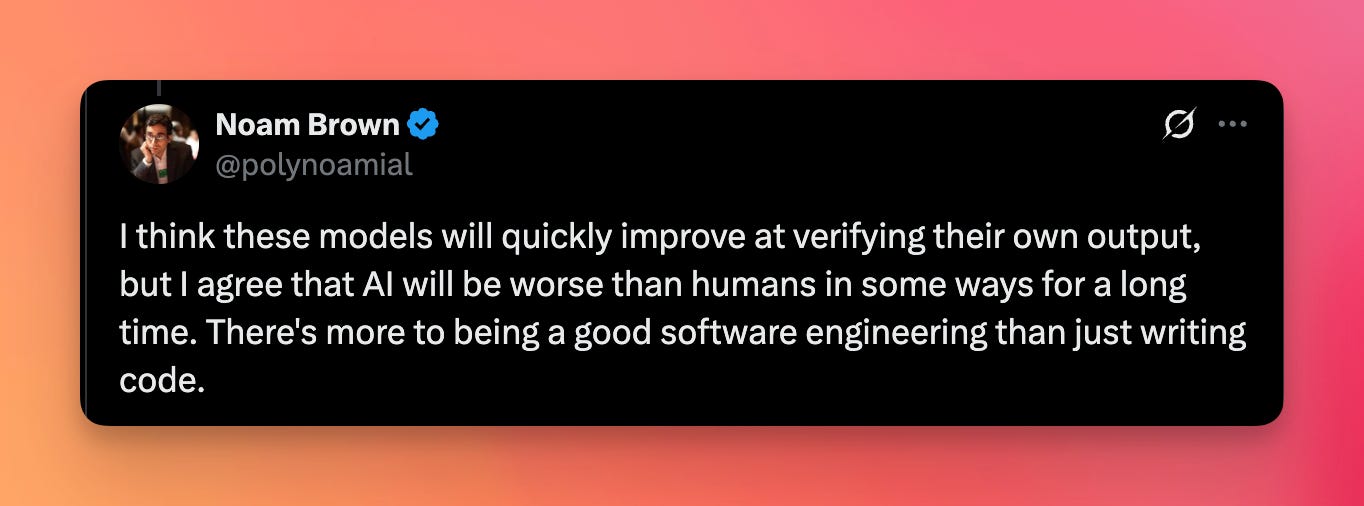

This is less fun than doing the planning & design, but it is still necessary. However, OpenAI researcher Noam Brown does think that AI will continue to get better at verifying its own output:

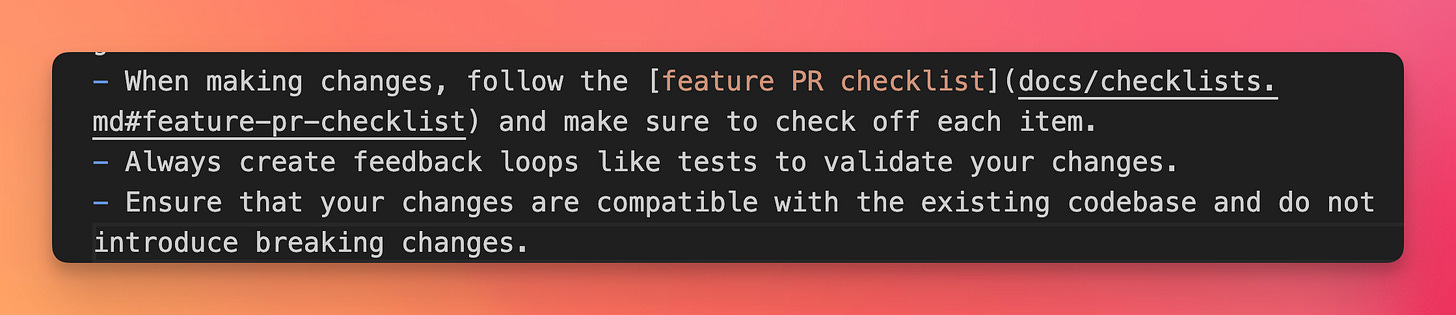

In your AGENTS.md file, you can instruct your coding agent to review its work, write tests, and run those tests to verify that the generated code works.

What you want to do is create feedback loops so your agent can identify issues before you push them to prod.
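One way to picture such a feedback loop is a small script the agent runs before it reports back. The sketch below is a minimal example, assuming the checks are ordinary commands that exit non-zero on failure. The `run_checks` helper and the placeholder commands are hypothetical, so swap in whatever test and lint commands your project really uses.

```python
import subprocess
import sys


def run_checks(commands):
    """Run each command; return (command, output) for every one that failed."""
    failures = []
    for cmd in commands:
        result = subprocess.run(cmd, capture_output=True, text=True)
        if result.returncode != 0:
            output = (result.stdout + result.stderr).strip()
            failures.append((cmd, output))
    return failures


if __name__ == "__main__":
    # Placeholder checks that use the current Python interpreter so the
    # sketch runs anywhere; replace them with your real test commands.
    checks = [
        [sys.executable, "-c", "print('tests passed')"],
        [sys.executable, "-c", "import sys; print('1 test failed'); sys.exit(1)"],
    ]
    failures = run_checks(checks)
    for cmd, output in failures:
        # This is the text the agent would read and act on.
        print(f"FAILED: {' '.join(cmd)}\n{output}")
    print(f"{len(failures)} of {len(checks)} checks failed")
```

The point of the design is that failures come back as text the agent can read and fix, rather than as something you discover after the push.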

OpenAI also released GPT-5-Codex this week (9/15/25), which was highlighted as being strong at code review.

I posted about how I set up a specialized subagent “codex-code-review” that uses the Codex CLI within Amp to do code review. It works great!

All of this is just talk unless it actually produces software that works. I encourage you to have a look at the Meta Agent repo. I created this v2 of Meta Agent entirely using this way of working with agents. Not quite done yet, but very close!