The Machine that Builds the Machine

How to use Codex, Linear, GitHub and Symphony to build a code factory

It all started in 2021 with GitHub Copilot and OpenAI Codex, a descendant of GPT-3. Back then it was nothing more than autocomplete on steroids. But if you squinted, you could see where AI was headed.

The destination was a world where you can build what you imagine.

You have arrived.

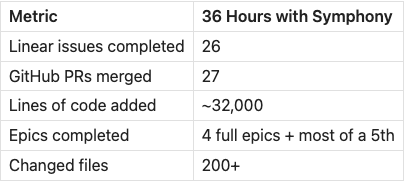

I’m writing this from the other side of a 36-hour window where AI agents autonomously completed 26 engineering tasks, merged 27 pull requests, and shipped 32,000 lines of production code to my open-source project. I slept through part of it.

This is not a demo. This is not a proof of concept. This is how I build software now. And I’m going to show you exactly how it works so you can do it too.

OpenAI’s Symphony: A Machine Intelligence to Build Machines

OpenAI quietly open-sourced something called Symphony. It’s an Elixir-based orchestrator, and it fundamentally changes the relationship between a human and a codebase.

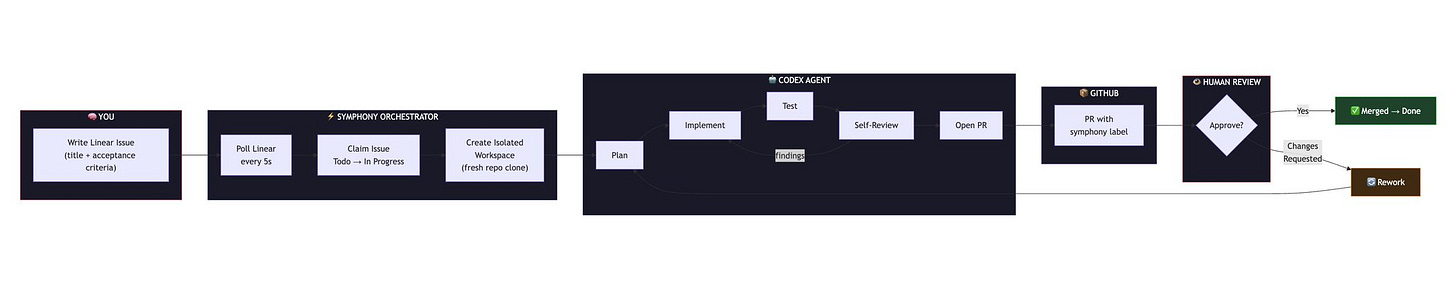

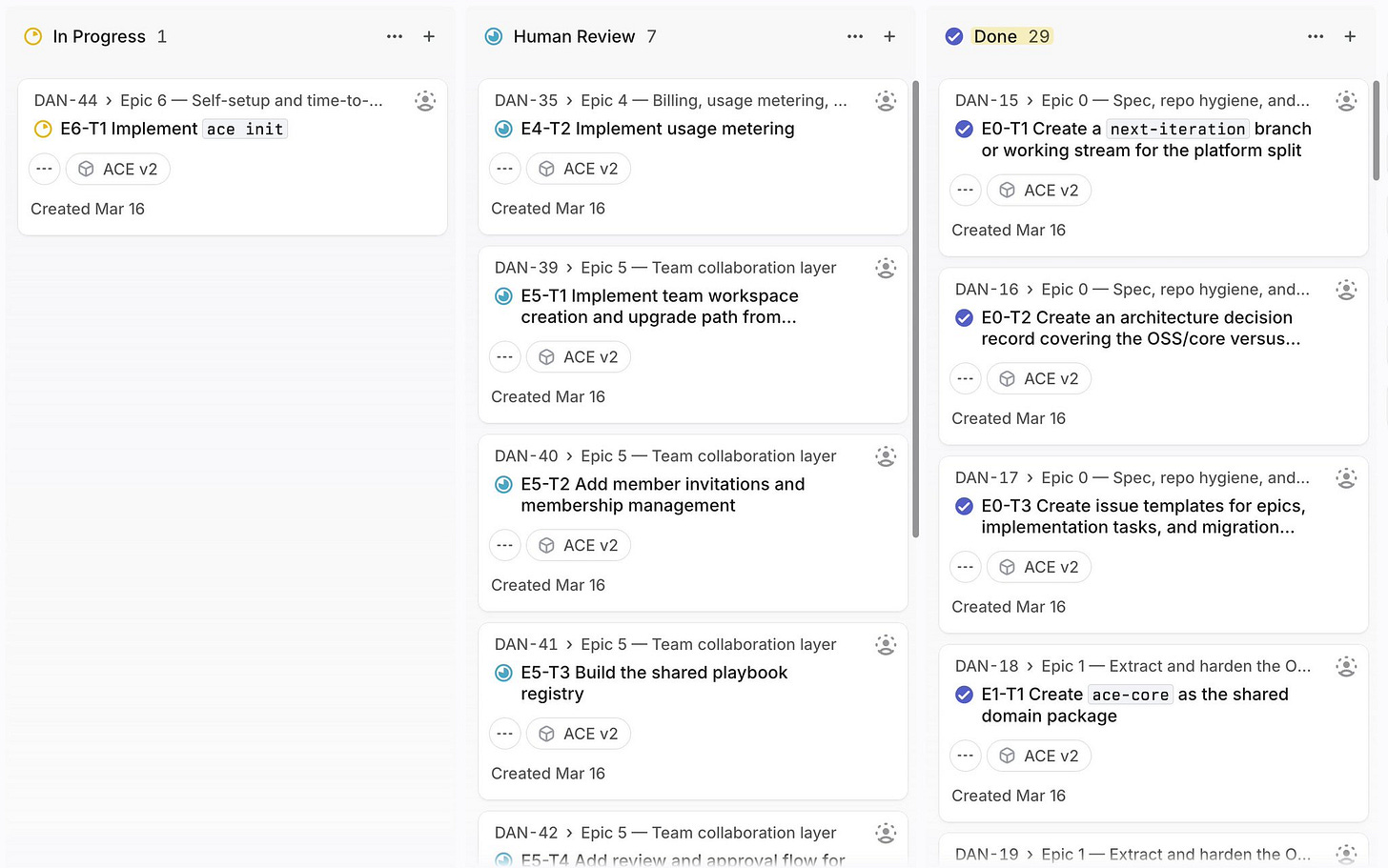

Here’s the loop: you write a ticket in Linear describing what you want built. Symphony polls Linear, picks up that ticket, clones your repository into a fresh isolated workspace, and launches a Codex agent in app-server mode to implement it. The agent plans, codes, tests, opens a pull request, runs a self-review, addresses its own feedback, and only then moves the issue to “Human Review” for you to look at.
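To make the loop concrete, here is a minimal sketch of one polling pass in Python. It is not Symphony's code (Symphony is written in Elixir), and the `Issue` class, the `ACE-1` style identifiers, and the function names are hypothetical stand-ins. The agent is simulated by a function that records the steps listed above instead of calling Codex.

```python
from dataclasses import dataclass, field


@dataclass
class Issue:
    """A hypothetical, minimal stand-in for a Linear issue."""
    identifier: str
    title: str
    state: str = "Todo"
    log: list[str] = field(default_factory=list)


# The agent's steps, in the order the article lists them.
AGENT_STEPS = ["plan", "code", "test", "open pull request", "self-review", "address feedback"]


def run_agent(issue: Issue) -> None:
    """Stand-in for a Codex agent working in a fresh clone of the repo."""
    issue.log.append("clone repository into isolated workspace")
    issue.log.extend(AGENT_STEPS)


def poll_once(tracker: list[Issue]) -> list[Issue]:
    """One polling pass: pick up every Todo issue and drive it to Human Review."""
    handled = []
    for issue in tracker:
        if issue.state != "Todo":
            continue  # already claimed or finished, so leave it alone
        issue.state = "In Progress"
        run_agent(issue)
        issue.state = "Human Review"
        handled.append(issue)
    return handled


tracker = [
    Issue("ACE-1", "Add health check endpoint"),
    Issue("ACE-2", "Fix login bug", state="Done"),
]
handled = poll_once(tracker)
print([(issue.identifier, issue.state) for issue in tracker])
```

Only the issue that was in "Todo" is touched. The one already marked "Done" is skipped, which is the property that lets the loop run repeatedly without redoing work.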

Your role in the digital creation process completely transforms.

You go from typing in code to ideating on creative direction.

The only limitation is your ability to specify an outcome.

That last part is key. You’re not prompting anymore. You’re not babysitting AI agents. You’re defining outcomes and letting machine intelligence figure out the implementation. It’s the difference between driving the car and telling the car where to go.

Symphony workflow diagram

The Architecture: How It All Fits Together

Four systems. That’s all it takes.

Linear is your task queue. You create issues — with clear titles, descriptions, and acceptance criteria — and drop them into “Todo.” That’s the last time you think about them until a PR shows up for review. You write what you want, not how to build it.

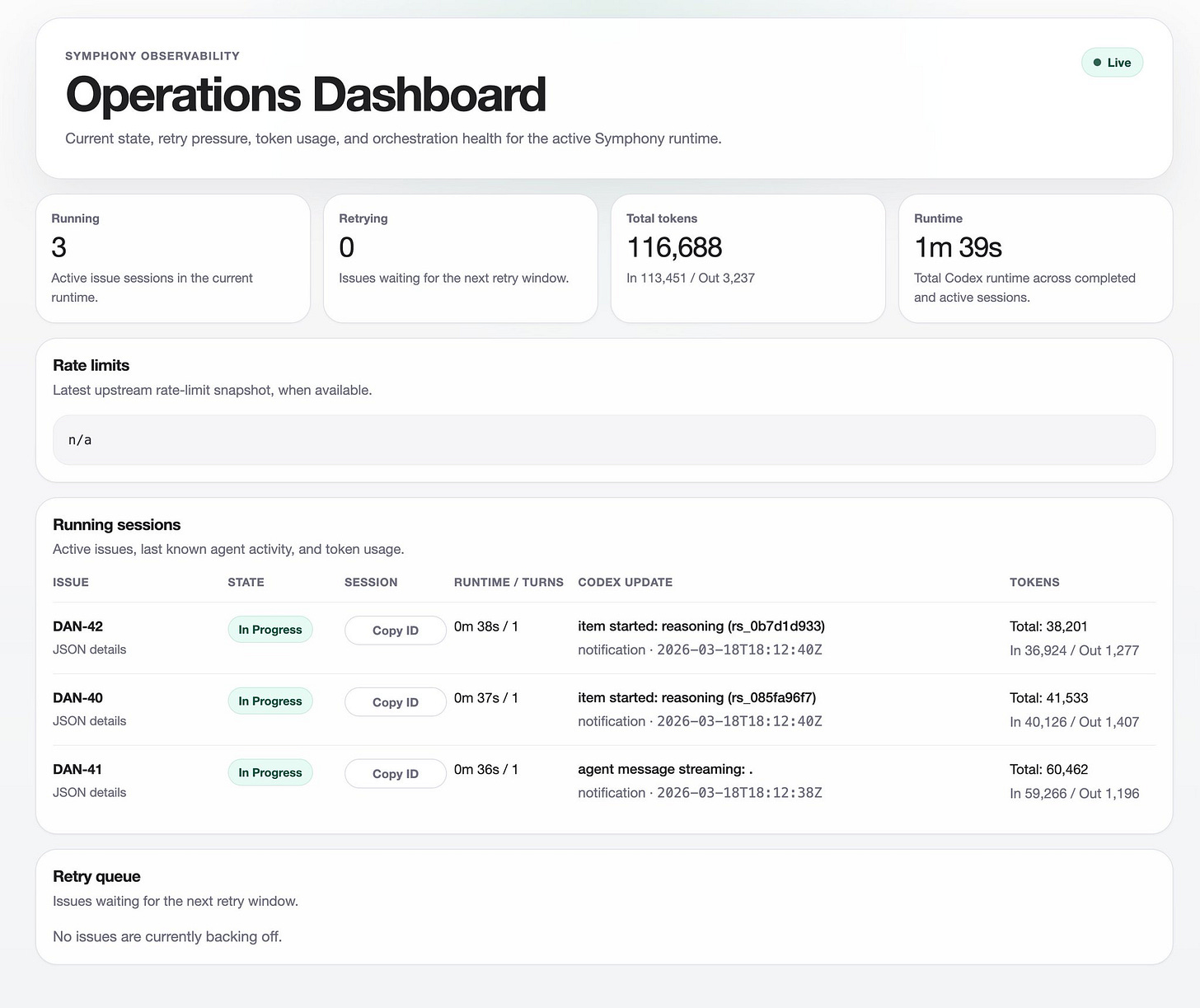

Symphony is the orchestrator. Built on Elixir and OTP (Erlang’s concurrency framework, battle-tested in telecom infrastructure), it polls Linear every five seconds looking for work. When it finds a “Todo” issue, it claims it, immediately transitions it to “In Progress,” and spins up an isolated workspace. In my config it manages up to ten concurrent agents (the limit can be set higher), each working on a different issue in parallel. It handles the full state machine: Todo → In Progress → Human Review → Merging → Done. When a human approves a PR, Symphony even handles the merge.
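The state machine and the concurrency cap can be sketched in a few lines of Python. This is an illustration under assumptions, not Symphony's Elixir implementation: the names `State`, `advance`, and `claim` are hypothetical, and only the forward transitions named above are modeled.

```python
from enum import Enum


class State(Enum):
    TODO = "Todo"
    IN_PROGRESS = "In Progress"
    HUMAN_REVIEW = "Human Review"
    MERGING = "Merging"
    DONE = "Done"


# Forward transitions only, in the order given in the text.
NEXT_STATE = {
    State.TODO: State.IN_PROGRESS,
    State.IN_PROGRESS: State.HUMAN_REVIEW,
    State.HUMAN_REVIEW: State.MERGING,
    State.MERGING: State.DONE,
}

MAX_CONCURRENT_AGENTS = 10  # the author's configured limit
POLL_INTERVAL_SECONDS = 5   # polling cadence from the text (unused in this sketch)


def advance(state: State) -> State:
    """Move an issue one step forward, refusing to move past Done."""
    if state not in NEXT_STATE:
        raise ValueError(f"{state.value} is a terminal state")
    return NEXT_STATE[state]


def claim(todo_ids: list[str], running: set[str]) -> list[str]:
    """Claim as many Todo issues as there are free agent slots."""
    free_slots = max(0, MAX_CONCURRENT_AGENTS - len(running))
    claimed = todo_ids[:free_slots]
    running.update(claimed)
    return claimed


running = {f"ACE-{n}" for n in range(1, 9)}  # eight agents already busy
claimed = claim(["ACE-20", "ACE-21", "ACE-22"], running)
print(claimed)  # two slots were free, so the third issue waits for the next poll
print(advance(State.TODO).value)
```

Keeping the transitions in a single table makes illegal moves (for example, Todo straight to Merging) impossible to express, which is the point of having a state machine at all.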

Codex is the coding agent. It runs in Codex’s app-server mode with full sandbox access to its isolated workspace — its own clone of the repo, its own virtual environment, its own git branch. It doesn’t just generate code. It plans. It reproduces issues before fixing them. It writes tests. It commits with clean messages. It opens PRs. It runs an automated self-review loop and addresses its own findings before asking a human to look. If a reviewer leaves feedback, it enters a rework cycle and starts fresh.

GitHub is where the code lands. Every PR gets a symphony label so you can filter agent-generated work. The automated self-review protocol sweeps PR comments, inline review feedback, and CI check results before the issue ever moves to human review. By the time you see a PR, the agent has already done at least one full round of quality assurance on its own work.
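One way to picture the pre-review sweep is as a gate that blocks the move to human review while anything is outstanding. The sketch below is a hypothetical illustration: `PullRequestStatus` and its fields are invented for the example, and fetching the data from GitHub is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class PullRequestStatus:
    """Hypothetical snapshot of a PR; how it is fetched is out of scope here."""
    unresolved_comments: int = 0
    unresolved_inline_reviews: int = 0
    # Maps check name to "success", "failure", or "pending".
    ci_checks: dict[str, str] = field(default_factory=dict)


def blockers(pr: PullRequestStatus) -> list[str]:
    """List every reason the PR is not yet ready for a human."""
    found = []
    if pr.unresolved_comments:
        found.append(f"{pr.unresolved_comments} unresolved PR comment(s)")
    if pr.unresolved_inline_reviews:
        found.append(f"{pr.unresolved_inline_reviews} unresolved inline review thread(s)")
    for name, result in sorted(pr.ci_checks.items()):
        if result != "success":
            found.append(f"CI check {name!r} is {result}")
    return found


def ready_for_human_review(pr: PullRequestStatus) -> bool:
    """The gate: only a PR with no blockers moves on."""
    return not blockers(pr)


clean = PullRequestStatus(ci_checks={"tests": "success", "lint": "success"})
dirty = PullRequestStatus(unresolved_comments=2, ci_checks={"tests": "failure"})
print(ready_for_human_review(clean))
print(blockers(dirty))
```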

The guardrails matter more than the capabilities. Each issue gets its own full repo clone, so agents can’t interfere with each other’s work. A “Linear Guard” I built validates that Symphony is pointed at the correct Linear workspace and team before it launches (because at one point it wasn’t 😅), so you don’t accidentally run ten agents against the wrong project. The state machine prevents agents from stepping on each other or making changes during human review.
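A guard like this can be very small. The sketch below is not the actual Linear Guard from the repo. It only shows the shape of the check, assuming the workspace and team the API key resolves to have already been looked up. `LinearTarget`, `GuardError`, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearTarget:
    workspace: str
    team: str


class GuardError(RuntimeError):
    """Raised when the orchestrator is pointed at the wrong place."""


def check_target(expected: LinearTarget, actual: LinearTarget) -> None:
    """Refuse to launch unless both workspace and team match exactly."""
    mismatches = []
    if actual.workspace != expected.workspace:
        mismatches.append(
            f"workspace is {actual.workspace!r}, expected {expected.workspace!r}"
        )
    if actual.team != expected.team:
        mismatches.append(f"team is {actual.team!r}, expected {expected.team!r}")
    if mismatches:
        raise GuardError("refusing to launch agents: " + "; ".join(mismatches))


expected = LinearTarget(workspace="my-workspace", team="ACE")
check_target(expected, LinearTarget("my-workspace", "ACE"))  # passes silently

try:
    check_target(expected, LinearTarget("other-workspace", "ACE"))
except GuardError as err:
    print(err)
```

Failing loudly before any agent starts is cheaper than cleaning up ten workspaces and a batch of misdirected pull requests afterwards.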

Focus on Outcomes, Not Inputs

Here’s the old way of working with AI coding tools: you write a prompt, babysit the agent, copy-paste the output, fix the hallucinations, and repeat. Over and over. You’re still doing too much typing in this scenario.

Here’s the new way: you write a Linear issue with clear acceptance criteria. You walk away. You come back to a pull request ready for review.

The workflow file is the real magic. It’s a Jinja2 template — over 300 lines of battle-tested instructions — that gets injected with the issue context every time an agent starts a task. It tells the agent how to work: reproduce the issue before fixing it, write a plan in a persistent workpad comment, check off items as they’re completed, run validation before every push, sweep all PR feedback before requesting human review, and never expand scope without filing a follow-up issue.
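The injection step itself is simple. The sketch below uses Python's standard-library `string.Template` as a stand-in for the real template engine so it runs with no dependencies. The five-rule template is a toy condensed from the rules above, not the actual workflow file, and the issue fields and values are hypothetical.

```python
from string import Template

# A tiny stand-in for the workflow file; the real one is over 300 lines.
WORKFLOW_TEMPLATE = Template(
    "You are working on issue $identifier: $title\n"
    "\n"
    "Description:\n"
    "$description\n"
    "\n"
    "How to work:\n"
    "- Reproduce the issue before fixing it.\n"
    "- Write a plan in a persistent workpad comment and check off items as you go.\n"
    "- Run validation before every push.\n"
    "- Sweep all PR feedback before requesting human review.\n"
    "- Never expand scope without filing a follow-up issue.\n"
)


def render_prompt(issue: dict[str, str]) -> str:
    """Fill the template; substitute() raises KeyError if a field is missing."""
    return WORKFLOW_TEMPLATE.substitute(issue)


prompt = render_prompt({
    "identifier": "ACE-42",
    "title": "Add rate limiting to the API",
    "description": "Acceptance criteria: requests over the limit receive HTTP 429.",
})
print(prompt)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice in this sketch: an agent launched with a half-filled prompt is worse than one that never launches.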

You define process rather than specific details. It’s the next level up in abstraction.

This is the shift from “AI-assisted coding” to “AI-managed engineering.” You’re not pair programming. You’re running a team of competent, always-on digital intelligences. And just like managing a team of human engineers, the quality bar is set by the creativity and precision of YOUR issues. Well-specified Linear tickets produce better output. Vague tickets produce vague work. This forces you to think like a product manager, which, counterintuitively, makes you a better engineer.

The irony is beautiful: the skill that matters most in an age of AI coding agents is the ability to write clearly about what you want.

The Numbers: Before and After

I’m not going to just tell you this works. I’m going to show you the receipts.

Here’s what Symphony actually produced on my ace-platform repository in a single 36-hour window on March 17-18, 2026. These numbers are pulled straight from Linear and GitHub — you can verify them yourself on the public repo.

How long would this take a solo developer writing code by hand?

My estimate: each of these tasks is a 1-3 day feature for an experienced engineer. At an average of two days, the 26 tasks come to roughly 52 engineering days, or about 2.5 months of full-time solo work. For a five-person team, it’s still 2-3 weeks.
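The arithmetic behind that estimate, spelled out. The task count and the 1-3 day range come from the text. The 21 working days per month and the five-day week are my assumptions, and splitting the work evenly across five people ignores coordination overhead.

```python
TASKS = 26                      # tasks completed in the 36-hour window
DAYS_LOW, DAYS_HIGH = 1, 3      # estimated effort per task, in engineer-days
WORKING_DAYS_PER_WEEK = 5       # assumption
WORKING_DAYS_PER_MONTH = 21     # assumption: roughly 21 working days per month
TEAM_SIZE = 5

average_days_per_task = (DAYS_LOW + DAYS_HIGH) / 2           # 2.0
total_days = TASKS * average_days_per_task                    # 52.0
solo_months = total_days / WORKING_DAYS_PER_MONTH             # about 2.5
team_weeks = total_days / TEAM_SIZE / WORKING_DAYS_PER_WEEK   # about 2.1

print(f"total effort: {total_days:.0f} engineer-days "
      f"(range {TASKS * DAYS_LOW}-{TASKS * DAYS_HIGH})")
print(f"solo: {solo_months:.1f} months")
print(f"team of {TEAM_SIZE}: {team_weeks:.1f} weeks with perfect parallelism")
```

Perfect parallelism gives just over two weeks for the team. Real coordination overhead is what pushes that toward the upper end of the 2-3 week range.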

Symphony did it in 36 hours. While I slept through part of it.

The Honest Caveats

I would be doing you a disservice if I made this sound like magic with no tradeoffs. There are real costs and real limitations.

Symphony is token-hungry. Running ten concurrent Codex agents burns through tokens fast. Each agent is doing multi-turn reasoning over a full codebase — planning, implementing, testing, self-reviewing, revising. That’s a lot of tokens. This is not free, and you should model the cost before committing to this workflow at scale.
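A back-of-the-envelope model is enough to start with. In the sketch below, every number except the task count is a placeholder I made up to show the calculation. They are not measurements from my run and not real prices, so substitute your own usage data and your provider's current rates.

```python
def estimate_cost_usd(
    tasks: int,
    input_tokens_per_task: int,
    output_tokens_per_task: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Total spend for a batch of tasks under a flat per-token price."""
    per_task = (
        input_tokens_per_task / 1_000_000 * usd_per_million_input
        + output_tokens_per_task / 1_000_000 * usd_per_million_output
    )
    return tasks * per_task


# Placeholder inputs: only the task count (26) comes from the article.
cost = estimate_cost_usd(
    tasks=26,
    input_tokens_per_task=2_000_000,   # placeholder
    output_tokens_per_task=100_000,    # placeholder
    usd_per_million_input=1.0,         # placeholder
    usd_per_million_output=10.0,       # placeholder
)
print(f"estimated spend: ${cost:.2f}")
```

Input tokens tend to dominate in this kind of workflow, because each turn re-reads a large slice of the codebase. That makes context size per turn the first thing to measure.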

Not every PR was perfect on the first pass. Some needed rework cycles. The automated self-review loop catches a lot — formatting issues, missing tests, logical errors it can identify by reasoning about its own code. But it doesn’t catch everything. Subtle architectural decisions, product-level judgment calls, and integration issues that only surface in a running system still require human eyes.

Garbage in, garbage out still applies. If you write a one-sentence issue with no acceptance criteria, you’ll get a one-dimensional implementation. The quality of Symphony’s output is directly proportional to the quality of your issue writing. The agents are doing what you told them to do. If you told them poorly, that’s on you.

Human review is still mandatory. Symphony moves issues to “Human Review” when it’s done, and you still need to actually review the code. This is not “set it and forget it.” It’s “set it, do other things, and then review the output.” The time savings are enormous, but the responsibility remains yours.

Try It Yourself

Everything I just described is open source. The ace-platform repo has the full Symphony integration wired up as a working reference implementation.

Here’s the quick start:

1. Clone the repo: git clone https://github.com/DannyMac180/ace-platform.git && cd ace-platform

2. Copy the example workflow: cp WORKFLOW.example.md WORKFLOW.local.md

3. Edit WORKFLOW.local.md to set your Linear project slug and your repo clone URL

4. Set your Linear API key: export LINEAR_API_KEY=lin_api_...

5. Run: ./scripts/run-symphony.sh

6. Create a Linear issue and watch it get built

The WORKFLOW.example.md file is the playbook — over 300 lines of battle-tested agent instructions that took real iteration to get right. It includes PR feedback sweep protocols, automated self-review loops, rework handling, scope guardrails, and a full state machine for issue lifecycle management. You can use it as-is or customize it for your own workflow.

Here’s the meta part: ace-platform itself is built on the ACE (Agentic Context Engineer) architecture — a three-agent system with a Generator, Reflector, and Curator that creates self-improving AI playbooks. So you’re using AI orchestration to build an AI orchestration platform. The machine that builds the machine, in a very literal sense.

Knowledge Work is Changed Forever

Software engineering is being automated. Not in five years. Now. The people who learn to orchestrate agents will build 10-100x more than those still writing code line by line — or even those generating code prompt by prompt. The gap isn’t between people who use AI and people who don’t. It’s between people who manage AI and people who prompt AI. Prompting is the old paradigm (only about a year old, though). Orchestration is the new one.

’26, ’27, and ’28 are the last few years where traditional knowledge work looks the same. After that, every field gets this treatment. First, it becomes possible for any field of knowledge work. Then, for every field of work. The pattern is always the same: the task gets decomposed, the specifications get formalized, the execution gets automated, and the human moves up to the judgment layer.

Entrepreneurship is about to explode. A solo founder with Symphony can ship what used to take a 10-person engineering team. Maybe even a 100-person team. The bottleneck moves from “can I build this?” to “should I build this?” And that second question is far more interesting than the first.

You can build things in minutes that used to take months.

Software ate the world, and now AI is eating software. The pace of progress will increase globally. We are headed toward a fast takeoff if we don’t screw it up. It will be a bumpy ride, but it will ultimately create abundance for humanity. Try to be less afraid.

The new skill stack is outcome definition, acceptance criteria writing, workflow design, and agent orchestration. Not syntax. Not frameworks. Systems thinking. Your knowledge work job will go from completing logical tasks to specifying desirable outcomes. Judgment in identifying what is genuinely valuable to do will become the most important thing.

This is the machine that builds the machine.

And it’s open source.

And it’s here.

If you want to try Symphony yourself, the full setup is at github.com/DannyMac180/ace-platform.

Follow me @daniel_mac8 for more on building with AI agents.